Introduction

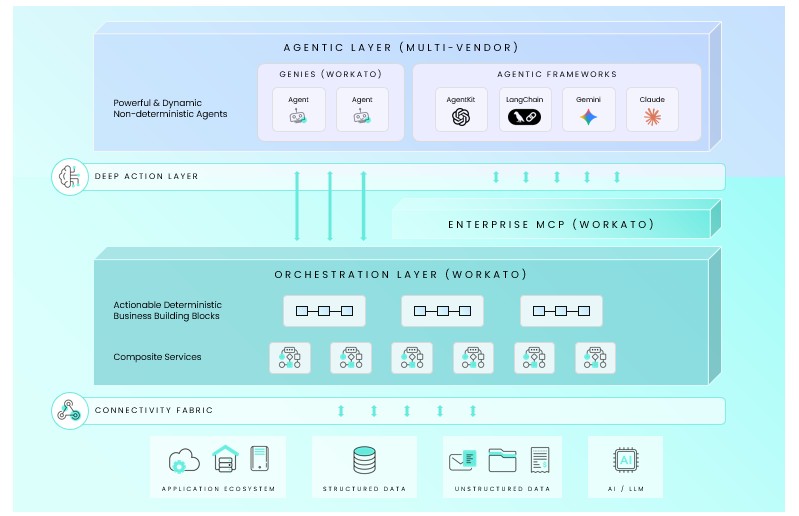

Generative AI became widely popular among consumers after ChatGPT was released in late 2022. Enterprises, however, struggled to operationalize it because every tool or data source needed a custom integration. In late 2024, Anthropic introduced the Model Context Protocol (MCP), which replaced model-specific integrations with a standard interface for connecting tools, data, and services. The promise of a universal interface built on common standards is so compelling that MCP is frequently described as the USB-C or TCP/IP of AI applications. MCP is a valuable innovation for connectivity, but on its own it does not address the security and governance requirements that make AI agents safe for enterprise use. This write-up introduces the concept of “Enterprise MCP”, an architectural control layer that operationalizes MCP for enterprise environments. Workato provides one reference implementation of this approach (see Figure 1).

The TCP/IP of the Agentic Era

MCP is an interesting innovation because it standardizes how agents connect to tools, context, and one another. Before MCP, developers had to build each connection by hand. For example, if you wanted Claude to access a PostgreSQL database, you had to write a custom integration. Switching from Claude to ChatGPT meant rewriting that integration, which increased technical debt and maintenance costs.

MCP standardizes this interaction through a protocol built around three core primitives that a server can expose: resources, prompts, and tools. By following this standard, any MCP client (the AI model/interface) can communicate with any MCP-compliant server (the tool/data source). Customers adopted the protocol to address diverse pain points, resulting in tens of thousands of MCP servers being deployed.
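To make the standard interface concrete, the sketch below builds the kind of JSON-RPC 2.0 messages that MCP clients send to servers. The method names `tools/list` and `tools/call` come from the MCP specification; the tool name `query_database` and its `sql` argument are hypothetical and stand in for whatever a given server actually exposes.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # monotonically increasing JSON-RPC request ids


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope, the message format MCP uses."""
    request = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# Step 1: ask the server which tools it offers.
list_tools = make_request("tools/list")

# Step 2: invoke one tool by name with structured arguments.
call_tool = make_request(
    "tools/call",
    {
        "name": "query_database",  # hypothetical tool name
        "arguments": {"sql": "SELECT count(*) FROM orders"},  # hypothetical arguments
    },
)

print(json.dumps(call_tool, indent=2))
```

Because the envelope is the same for every server, the client code does not change when the model or the backing system changes; only the tool name and arguments do.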

The standardization that accelerates adoption also amplifies risk when agents act autonomously.

Autonomous AI Changes the Rules of Risk

While MCP facilitates the integration of AI tools and services, the resulting efficiency gains come with business risks. The key difference is that AI systems can determine how to achieve goals, whereas traditional systems follow a predefined path.

Traditional systems can be compared to a vending machine: you press a specific button to get a specific result. A customer must remain within the system’s boundaries; there is no way to exceed its design limits. Because outcomes are predefined, risk is easier to manage.

AI agents using MCP, on the other hand, are more akin to personal shoppers. Instead of specific instructions, a personal shopper is given a broad goal and access to resources. For example, assume the shopper is given a credit card and a broad instruction such as “Buy groceries for a week”. The shopper is free to buy any brand, visit any aisle, and spend as much as they like. For an enterprise, such freedom translates to unacceptable risk. If this shopper were an AI agent, it could be manipulated or compromised into buying far more than intended.

As a result, AI agents using MCP deliver value but are risky in the absence of guardrails. Those guardrails must be built into the AI architecture rather than relying on traditional system controls.
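A guardrail of this kind can be sketched in a few lines. The example below is a minimal illustration that assumes two simple controls, a tool allowlist and a spending cap; the `Guardrail` class, the tool names, and the limit are all hypothetical and not part of MCP or any product.

```python
from dataclasses import dataclass


@dataclass
class Guardrail:
    """Checks every requested action before it reaches a real system."""

    allowed_tools: frozenset
    spend_limit: float
    spent: float = 0.0

    def authorize(self, tool: str, amount: float = 0.0) -> bool:
        # Reject tools that were never granted to this agent.
        if tool not in self.allowed_tools:
            return False
        # Reject actions that would push cumulative spend over the cap.
        if self.spent + amount > self.spend_limit:
            return False
        self.spent += amount
        return True


# The "personal shopper" may only buy groceries, up to 200 in total.
shopper = Guardrail(allowed_tools=frozenset({"buy_groceries"}), spend_limit=200.0)

first = shopper.authorize("buy_groceries", 150.0)    # within budget
second = shopper.authorize("buy_groceries", 100.0)   # would exceed the cap
third = shopper.authorize("buy_electronics", 10.0)   # tool not on the allowlist
print(first, second, third)
```

The point of the design is that the check sits outside the agent: even a manipulated agent cannot spend past the cap, because the decision is not left to the model.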

Operationalizing MCP for the Enterprise

A helpful way to understand the difference between out-of-the-box MCP and Enterprise MCP is to compare bare electrical wiring with a standard wall outlet.

Out-of-the-box MCP is like connecting to exposed electrical wiring. It takes experts to connect those wires to devices, and there is no protection against power surges. While electricity can be delivered this way, no organization would accept the associated risks.

Enterprise MCP, on the other hand, is like a wall outlet that provides electricity safely. Wiring is concealed and integrated into the building infrastructure. The user benefits from power without needing to understand or manage the underlying risk. Just as an organization would consider exposed wiring unacceptable for electrical delivery, it should consider employees connecting critical systems via custom scripts unsafe.

Enterprise MCP enables agentic automation through a control plane that ensures security, governance, and operational discipline.

Inside Workato’s Enterprise MCP Framework

The goal of enterprise AI is to automate core business processes without introducing new risks. In one example of an AI error, an airline’s chatbot promised a customer an out-of-policy retroactive refund, and the airline was ordered to honor the amount based on the chatbot’s hallucinated advice. This incident demonstrates that autonomous AI actions can have real legal and financial consequences.

To prevent these outcomes, Workato’s Enterprise MCP Framework acts as a managed control layer between the AI model and applications. It focuses on three things: trust, context, and skills.

Trust: Workato enforces runtime user authentication, which means agents inherit the user’s actual permissions rather than operating under shared service accounts. For example, if a marketing representative asks an agent to access customer data, the agent can see only what that representative is authorized to access under role-based access controls.
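The idea of an agent inheriting the caller’s permissions can be illustrated with a small sketch. This is not Workato’s API; the role names, the permission strings, and the `fetch_customer` helper are assumptions made purely for illustration.

```python
# Hypothetical role-to-permission mapping; a real deployment would read this
# from the identity provider or the source application's access controls.
ROLE_PERMISSIONS = {
    "marketing_rep": {"customer.name", "customer.email"},
    "finance_analyst": {"customer.name", "customer.credit_limit"},
}


def fetch_customer(record: dict, user_role: str) -> dict:
    """Return only the fields that the calling user's role may read.

    The agent passes along the role of the user it acts for, so it never
    sees more than that user could see directly.
    """
    allowed = ROLE_PERMISSIONS.get(user_role, set())  # unknown roles get nothing
    return {
        field: value
        for field, value in record.items()
        if f"customer.{field}" in allowed
    }


record = {"name": "Acme Corp", "email": "ops@acme.example", "credit_limit": 50000}

marketing_view = fetch_customer(record, "marketing_rep")
finance_view = fetch_customer(record, "finance_analyst")
print(marketing_view)
print(finance_view)
```

The same request returns different data depending on who is asking, which is the behavior a shared service account cannot provide.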

Enterprise MCP builds security into the platform architecture in multiple ways. For example, field-level security controls determine which data can enter the LLM context, and sensitive personally identifiable information (PII) is obfuscated before agents see it. Agent actions are also logged with complete traceability. Taken together, these controls help meet security and compliance requirements.
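The three controls described here (field-level filtering, PII obfuscation, and action logging) can be sketched together. The example below is a simplified illustration under stated assumptions: the list of sensitive fields, the email pattern, and the `prepare_for_llm` function are hypothetical, and production-grade PII detection is considerably more involved.

```python
import re
from datetime import datetime, timezone

SENSITIVE_FIELDS = {"ssn", "card_number"}  # hypothetical field-level policy
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")  # deliberately simple pattern

audit_log = []  # in-memory stand-in for a durable audit trail


def prepare_for_llm(record: dict, agent_id: str) -> dict:
    """Filter and mask a record before it is placed in the model's context."""
    safe = {}
    withheld = []
    for field, value in record.items():
        if field in SENSITIVE_FIELDS:
            withheld.append(field)  # field-level control: never enters the context
            continue
        if isinstance(value, str):
            value = EMAIL_RE.sub("[REDACTED_EMAIL]", value)  # mask inline PII
        safe[field] = value
    # Record who accessed what, and what was held back, for traceability.
    audit_log.append(
        {
            "agent_id": agent_id,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "fields_sent": sorted(safe),
            "withheld": sorted(withheld),
        }
    )
    return safe


record = {
    "name": "Dana",
    "ssn": "123-45-6789",
    "note": "Contact dana@example.com today",
}
safe_record = prepare_for_llm(record, agent_id="support-agent-1")
print(safe_record)
```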

Context: AI systems hallucinate when they lack access to current, accurate data from systems of record. Simply giving agents access to individual applications isn’t enough, however; they need orchestrated context across systems. For example, when a sales agent generates a quote, the agent needs more than CRM data: it also needs ERP and billing data, pricing rules from contract management, and credit approval.

Workato provides this orchestrated context through a unified integration fabric that connects to thousands of enterprise applications. Rather than agents making multiple fragmented API calls and trying to reconcile data inconsistencies, they receive a complete, accurate context assembled from across your system landscape. This eliminates hallucinations caused by stale or incomplete data and ensures that agents make decisions based on the current state of your business.
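One way to picture orchestrated context is a single function that gathers data from each system of record and hands the agent one assembled object. The sketch below uses stub functions in place of real connectors; the source names, the field names, and `assemble_quote_context` are hypothetical and do not represent Workato’s implementation.

```python
from typing import Callable, Dict, Optional

Fetcher = Callable[[str], Optional[dict]]


def assemble_quote_context(account_id: str, sources: Dict[str, Fetcher]) -> dict:
    """Collect data from every source into one context object.

    Sources that return nothing are listed explicitly, so the agent knows
    the context is incomplete instead of guessing at the missing values.
    """
    context = {"account_id": account_id}
    missing = []
    for name, fetch in sources.items():
        data = fetch(account_id)
        if data is None:
            missing.append(name)
        else:
            context[name] = data
    context["missing_sources"] = missing
    return context


# Stub fetchers standing in for CRM, ERP, and credit systems.
sources = {
    "crm": lambda account_id: {"owner": "J. Rivera", "stage": "negotiation"},
    "erp": lambda account_id: {"open_invoices": 2},
    "credit": lambda account_id: None,  # simulates a system with no record
}

context = assemble_quote_context("ACME-001", sources)
print(context)
```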

Skills: A Workato skill is a business workflow packaged as a single capability that an agent can invoke. This is fundamentally different from granting agents raw API access and expecting them to figure out complex processes.

Skills make execution predictable when agents invoke them in context. A single skill packages everything the task requires, such as business logic, approval workflows, and compliance checks. An example is a standard “process refund” skill that accesses all the systems required to complete the task. Other common skills include onboarding new hires and approving expenses. Reusing such skills across agents makes AI adoption more efficient.
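The difference between a skill and raw API access can be shown with a small sketch of a refund skill. Everything here is an assumption made for illustration: the 30-day window, the auto-approval limit, and the `process_refund` function are hypothetical policy values and names, not a Workato interface.

```python
from dataclasses import dataclass

REFUND_WINDOW_DAYS = 30     # hypothetical policy: refunds allowed within 30 days
AUTO_APPROVE_LIMIT = 100.0  # hypothetical policy: larger refunds need a human


@dataclass
class RefundRequest:
    order_id: str
    amount: float
    days_since_purchase: int


def process_refund(request: RefundRequest) -> dict:
    """A skill: one callable that carries the business rules with it.

    The agent decides when to call it, but cannot skip the compliance
    check or the approval step, because both live inside the skill.
    """
    # Compliance check: enforce the refund policy window.
    if request.days_since_purchase > REFUND_WINDOW_DAYS:
        return {"status": "rejected", "reason": "outside refund window"}
    # Approval workflow: route large refunds to a human approver.
    if request.amount > AUTO_APPROVE_LIMIT:
        return {"status": "pending_approval", "reason": "amount exceeds auto-approve limit"}
    # Business logic: a real skill would call billing and order systems here.
    return {"status": "refunded", "order_id": request.order_id, "amount": request.amount}


small = process_refund(RefundRequest("ORD-1", 40.0, days_since_purchase=5))
large = process_refund(RefundRequest("ORD-2", 400.0, days_since_purchase=5))
late = process_refund(RefundRequest("ORD-3", 40.0, days_since_purchase=90))
print(small, large, late, sep="\n")
```

An out-of-policy retroactive refund, like the one in the chatbot incident described earlier, would be rejected by the skill regardless of what the model told the customer.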

Workato’s Enterprise MCP enables critical workflows to be executed in a predictable, governed way, resulting in AI automation that is more secure and reliable, and easier for IT to control at scale. While many organizations are still piloting agentic AI, some enterprises are already seeing measurable impact from agents built on Enterprise MCP, as shown in the examples below.

1. At a global cybersecurity company, quote generation time was reduced by approximately 10x by allowing agents to use governed workflows across CRM and ERP systems. Pricing policies and credit limits were enforced at execution time, preventing policy violations despite increased automation.

2. 40% of helpdesk interactions were automated by agents using skills on a connected operations platform. Each skill executes with the same security and compliance standards whether an agent or a human operator invokes it.

These are production deployments where agents handle real transactions with real business consequences. What separates these successes from the frequently cited 95% of AI projects that fail? Enterprise MCP provided the trust, context, and skills necessary for safe autonomous operation.

Conclusion

MCP standardizes access to tools and data, which reduces integration overhead. However, production deployments indicate that protocol standardization addresses only the connectivity layer. Production-grade agents for core business processes require verifiable trust, orchestrated context, and reusable skills. These capabilities are essential in helping enterprises move from pilot AI projects to production workloads.

With a complete control plane, Enterprise MCP turns standard MCP connectivity into enterprise-ready orchestration. To get the most value from AI agents, organizations must move beyond experimentation and deploy agents in core business processes. Doing so safely requires Enterprise MCP, with the governance, security, and reliability controls that production environments demand.